Agentic coding workflows

-

@dannytaurus lol I burned through the 100 bucks Claude Max subscription in 3 days.

-

@Christoph-Hart Haha, you're in the honeymoon period!

And I suspect you're diving way deeper with it than most of us are.

-

@Christoph-Hart Woah I am gonna have to try this out

-

@HISEnberg sure, I'm holding back about 50-60 commits with that stuff, but as soon as the MVP is solid I'll let you all play with this. The current feature I'm toying around with is the ability to create a list of semantic events that will create an automated mouse interaction:

[
  { type: "click", id: "Button1", delay: 0 },
  { type: "drag", id: "Knob1", delta: [50, 60], delay: 500 },
  { type: "screenshot", crop: "Knob1" }
]

The AI agent sends this list to HISE. HISE opens a new window with an interface and simulates the mouse interaction that clicks on Button1 (I had to hack the low-level mouse handling in JUCE to simulate a real input device for that), then drags Knob1 and takes a screenshot. The agent then checks whether the screenshot shows the code path of the LAF it sent to HISE previously, realizes that the path bounds need to be scaled differently, and retries until the screenshot matches its expected outcome. If it just needs to know whether the knob drag updated the reverb parameter, it fetches the module list from HISE and looks at the SimpleReverb's attribute directly - this won't waste context window space on image analysis.
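For anyone who wants to picture the agent side of this, here is a minimal Python sketch that builds the same three events and sanity-checks them before serializing. The required-key table and the `validate_events` helper are assumptions made for illustration; the post does not describe the actual schema or how the list is transported to HISE.

```python
import json

# Hypothetical schema: which keys each event type needs (not taken from HISE).
REQUIRED_KEYS = {
    "click": {"id"},
    "drag": {"id", "delta"},
    "screenshot": {"crop"},
}

def validate_events(events):
    """Raise ValueError on the first malformed event, otherwise return the list."""
    for index, event in enumerate(events):
        kind = event.get("type")
        if kind not in REQUIRED_KEYS:
            raise ValueError(f"event {index}: unknown type {kind!r}")
        missing = REQUIRED_KEYS[kind] - set(event)
        if missing:
            raise ValueError(f"event {index}: missing {sorted(missing)}")
    return events

# The three events from the post, written as plain dictionaries.
events = [
    {"type": "click", "id": "Button1", "delay": 0},
    {"type": "drag", "id": "Knob1", "delta": [50, 60], "delay": 500},
    {"type": "screenshot", "crop": "Knob1"},
]

# JSON text that an agent could hand to whatever transport is in use.
payload = json.dumps(validate_events(events))
```

Checking the list before sending keeps a malformed event from costing a full round trip through the screenshot loop.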

It's that feedback loop that allows it to autonomously iterate to the "correct" solution (aka ralph loop), so even if it only gets it right 90% of the time, it will approach 99.9% after three rounds of this.
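The 99.9% figure works out if each round is treated as an independent attempt with a 90% success rate. That independence is an assumption; in a real loop each retry sees the previous failure, so the rounds are not independent. Under that assumption, the chance that at least one of n rounds succeeds is 1 - (1 - p)^n, which a few lines of Python can confirm:

```python
def success_within(rounds: int, p_single: float) -> float:
    """Probability that at least one of `rounds` independent attempts succeeds."""
    return 1.0 - (1.0 - p_single) ** rounds

# Cumulative success chance after one, two and three rounds at 90% per round.
for n in range(1, 4):
    print(n, round(success_within(n, 0.9), 4))
```

With p = 0.9 the failure chance shrinks by a factor of ten per round (0.1, 0.01, 0.001), which is where 99.9% after three rounds comes from.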

-

This was all a big surprise to wake up to.

Altar had quite a bit of vibe-coding involved, specifically on the modular drag-n-drop FX panels, and some of the third-party DSP nodes. I used various models available on GitHub Copilot (mostly Claude) because it seems to handle multiple files / larger context windows better than the standalone models.

That being said, it still required quite a bit of manual fixing up. A common one mentioned above was using var instead of local with inline functions etc, and it would default to using JUCE buffers instead of the templated SNEX methods which I assume comes with a performance hit.

I think a good idea would be to establish some sort of global guiding prompt which includes some of the most important best practices for HISE and appending that to individual case-specific prompts eg:

"Use this bestPractices.md to familiarize yourself with the HISE codebase, remember to make use of the existing templated functions in the auto-generated files and only use JUCE methods as a fallback" etc etc

-

@iamlamprey said in Agentic coding workflows:

"Use this bestPractices.md to familiarize yourself with the HISE codebase, remember to make use of the existing templated functions in the auto-generated files and only use JUCE methods as a fallback" etc etc

this.

Perhaps it might be worth thinking about how this is integrated into the agent's approach for every prompt it gets issued, a little like the MCP server interaction above...
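One simple way to picture "for every prompt" is a small wrapper that always puts the shared guidance in front of the case-specific request. The sketch below is only an illustration: `compose_prompt` is a hypothetical helper, the guide text is a stand-in for whatever bestPractices.md would contain, and real agent tools may offer their own mechanism for project-wide instructions.

```python
def compose_prompt(guide: str, task: str) -> str:
    """Return the shared guidance followed by the case-specific task."""
    return f"{guide.strip()}\n\n---\n\n{task.strip()}\n"

# In a real setup the guide would be read from disk, for example:
#   guide = Path("bestPractices.md").read_text(encoding="utf-8")
# A short stand-in is used here so the example runs on its own.
guide = (
    "Use local instead of var inside inline functions.\n"
    "Only use JUCE methods as a fallback."
)

prompt = compose_prompt(guide, "Write a callback for Knob1.")
print(prompt)
```

Keeping the guidance in one file means a newly discovered recurring mistake only has to be written down once.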

Big warning: I'm even more of a noob at this stuff than I think anyone here - I haven't used AI for any coding work - so watch out, I may be talking bullsh*t

-

I've used Antigravity to get myself up to speed with JUCE. I've built quite a few things outside of HISE using it. To the point where for my own company, I don't think I will need HISE. For freelance work, HISE is still a solid option. But I saw a competitor today show off his AI plugin generator, and it was very impressive.

In terms of HISE specific code I've been doing, certainly not entire namespaces or anything to do with the UI. But methods and functions here and there, that I knew what I wanted to do, but I just wanted AG to write it faster for me.

I've had more success with AG than ChatGPT on this stuff. Although ChatGPT is actually quite good at writing prompts.

And on a tangent slightly, but one of the biggest hurdles to writing complex projects in HISE is just how many languages and approaches you need to take command of. In C++ I can focus on JUCE and C++. In HISE, I need to learn HISEscript, C++, SNEX, simple markdown, and even CSS. This is a barrier to speed.

-

I played with Hise for a month last year and quickly learned that my brain doesn't have the interest and time to learn everything about scripting and development needed to create the semi-complex plugins I'd like to, so I tried using LLMs with moderate success.

The most immediate issue I found has already been described here - the LLMs would continue to make the same basic scripting mistakes, assuming more standard JavaScript. It can be reduced to some degree by providing the agents with strict rules and contexts, but they still revert to making an unacceptable number of the same basic mistakes that you've clearly instructed them not to, even when stating specific instructions directly within the prompts.

That's where it's been most obviously unusable for me; if I'm spending half the time reiterating the same basic Hise scripting errors back to the LLM, that's too much time and tokens wasted to achieve anything substantial. It's mostly the frustration of it that kills the motivation to continue, otherwise it has great potential!

I really hope this can be overcome to make plugin development viable for people like myself, who have the interest, but not necessarily the time and programming experience to achieve what we'd like to without the help of LLM agents.

It would be great to see Hise not only survive, but potentially thrive with AI. Hise has enough going for it that, if updated with effective LLM scripting capacity, it would likely be worth the Hise and Juce license fees for me over other free options.

Whoever implements AI plugin development in the most efficient and appealing way will pocket our money at the end of the day.

BTW you can use the 'Recompile On File Change' Hise option along with an external editor's Auto Save option to seamlessly auto compile plugins/scripts at the time you change window focus from the external editor to Hise.

-

I can see cost being a massive restriction for using agents regularly. I've just burned through my limit with Claude Pro in a couple of hours. Now I have to wait for the lockout to reset in a few hours before I can continue or I have to switch to PAYG, and I'm already 12% through my weekly limit.

-

@David-Healey said in Agentic coding workflows:

I can see cost being a massive restriction for using agents regularly.

Try Google's Antigravity with the Gemini 3 Flash model set to Fast.

It's near the quality of their Pro models but with significantly higher free limits.

Claude Sonnet models have typically been the best I've experienced, but the gap is small now, even for Google's Gemini 3 Flash model, which people are actually preferring; just be prepared for it to once in a while randomly write 100 lines for a 5 line piece of code.

Claude's pricing is ridiculous.

I would be very hesitant to pay a subscription for any AI model when my input is training it, unless it happens to ultimately demonstrate consistent error-free value.

-

And on a tangent slightly, but one of the biggest hurdles to writing complex projects in HISE is just how many languages and approaches you need to take command of. In C++ I can focus on JUCE and C++. In HISE, I need to learn HISEscript, C++, SNEX, simple markdown, and even CSS. This is a barrier to speed.

Yup, I understand that, however nobody forces you to use CSS or markdown :) On the other hand, the LLM has no problem using different languages (assuming that I solve the knowledge gap problem with HiseScript and make the CSS parser a bit more standards-compliant), so I would say that if you don't understand CSS you can still use it through an LLM.

That's where it's been most obviously unusable for me; if I'm spending half the time reiterating the same basic Hise scripting errors back to the LLM, that's too much time and tokens wasted to achieve anything substantial. It's mostly the frustration of it that kills the motivation to continue, otherwise it has great potential!

Yes, that is basically LLM integration 101: remove the friction point that it mixes up HiseScript and JavaScript. I'm pretty confident that I'm 80% there with the MCP server and the latest models, but this will be an iterative process that will take some time.

But I agree that if HiseScript turns out to be a burden (hallucinates JavaScript) instead of an opportunity (allows a fast & tight feedback loop) for LLMs then there will be smoother workflows from competitors, so this is a key problem for me to solve.

-

@Christoph-Hart When I started using ChatGPT to help me scale and optimize scripts in HISE over 2 years ago, I foresaw the very thing you are describing now as the future of HISE. I never got around to writing a post of my vision.

I didn't come to HISE because I wanted to learn coding. I had to grasp coding in order to make the instruments I wanted to make. Whatever is the most practical approach to achieve a goal is the one I use.

I am delighted to hear about this new direction and the progress you are making. The more of a seamless, logical, frictionless, straightforward experience you make for us using HISE, the better the platform is. I'll code what I can when I have to, but I'm not here to code.

AI has been an indispensable help for me with web stuff as well, helping me troubleshoot, fix, clean and optimize things.

Good on you, Christoph, for moving in this direction.

-

@Christoph-Hart said in Agentic coding workflows:

I'm pretty confident that I'm 80% there with the MCP server and the latest models

Hi Christoph.

Is there something ready to test regarding this?

With simple instructions for use with Google's Antigravity if possible.

-

@Bart yes, the MCP server and LSP servers are fully functional, but I'm only using Opencode with Claude here so I can't say how it works with Google's toolkit.

-

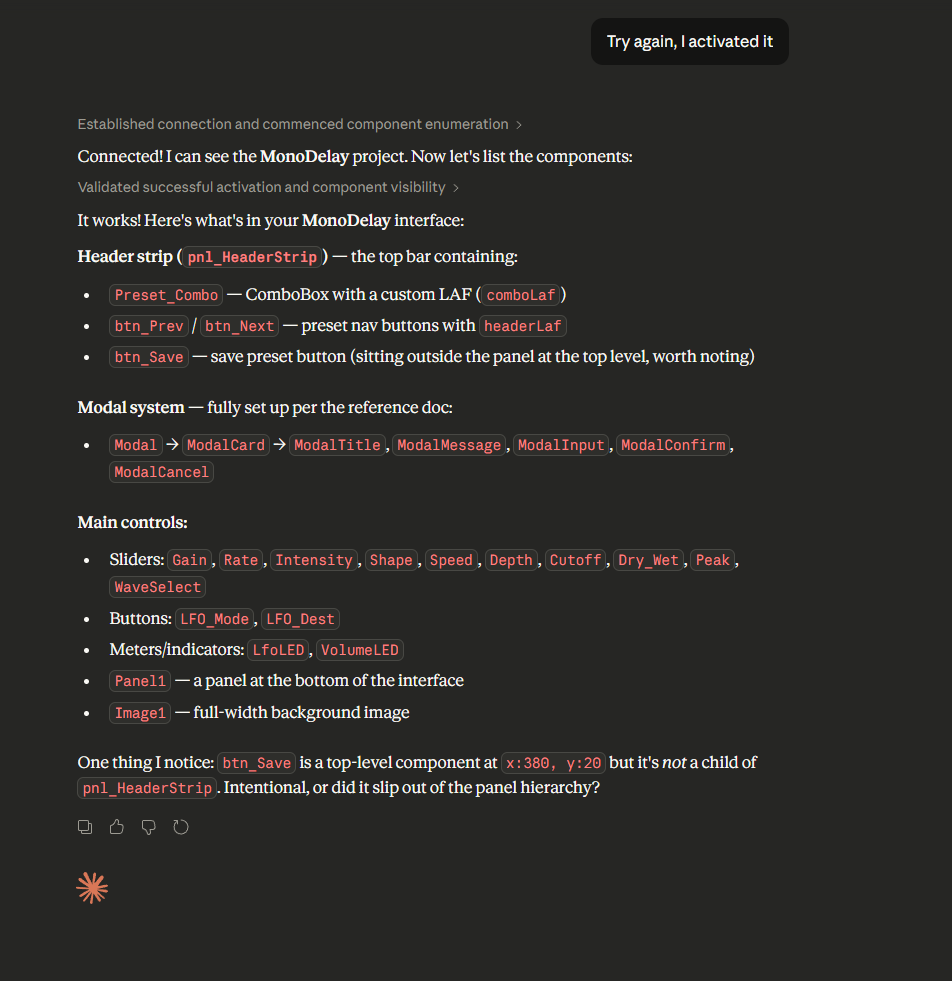

Really nice work man! Gonna play around with it. It's already mouthing me off on button placement

-

@Christoph-Hart How are you finding Opencode? I'm trying out a few but haven't landed on one I like best yet.

Cursor: love the chat history and better context management, but steering messages are janky

Claude Code: like the simplicity of single terminal window but not really the resulting view

Codex: love the split chat/diff layout and it has chat/task history too, best so far for me

Haven't tried Opencode yet. Looks a lot like Claude Code in layout and style.

-

@dannytaurus I'm also thinking of trying the integration in Zed

So many choices, so little time.

-

@David-Healey @dannytaurus .. I'm just going to wait until you all decide what the best one is, then use that....

-

I actually haven't tried anything other than opencode - it sometimes crashes after sessions but that's the only thing that annoys me, the rest is close to perfection for my taste.

I just noticed that anything lower than Opus 4.6 or equivalent models from competitors is complete trash for almost every task I threw at it - a notable mention goes out to GLM5, which they advertise as a replacement to opus-type models but it spectacularly failed with a simple and deterministic refactoring job.

-

I finally had a chance to dig into the new setup this week and my head is still spinning with the possibilities. I agree with Christoph that there is something almost uncanny about Opus 4.6 (I'd add Sonnet 4.6 which I use for most tasks) when it comes to writing HiseScript and editing the source code. The next best models which I find produce useful enough results are GPT-5.4, and Gemini's new multimodal embedding model has piqued my interest as well.

It's still early days of trying out different workflows but what does everyone's setup look like currently? Are you still using the HISE script editor or do you find working out of a separate IDE more convenient at this point? I've been using Visual Studio for the most part, launching Claude Code or Codex in Terminal. Admittedly, I still find the HISE script editor too useful to part with completely...

On another note, how are you all getting along with specific HISE projects at this stage? One thing I am noticing is how much more aggressive Claude Code can be on token usage if you don't prompt and direct it right. Previously I would consolidate all necessary information (Scripts, XMLs, Dsp Files, scope of work, bug checklist, etc.) along with specific examples, API documentation, or source code from HISE, and create a new project with an LLM. This seems to have the added benefit of keeping the model more focused and faster, using fewer resources and, most importantly, retaining memory. The main drawbacks are the lack of project/HISE context and the lack of write access to the project.

So the MCP server approach does allow for read/write to the project and clearly performs significantly better, but requires careful prompting and project context to avoid significant token usage. Figuring out the right balance between comprehensive context and efficiency is the real challenge for me moving forward. I imagine this just boils down to reformatting my setup to include the right sort of skills and tools, and spawning subagents in the right context.

I'll have to try out opencode as Christoph suggests, and using it to make edits to the HISE source code is next on my bucket list as well!