Multi-Mic Positions and Terminology

-

Hey guys, what is your definition of mic positions? I'm coming from a Kontakt framework, and my definition of a mic position is having, say, a piano library with separate sample recordings from the ambient mic, the resonant mic, and a mic by the foot.

From a programming standpoint, are you using the term mic position to describe a separate oscillator? Say, for instance, you have a sample library of an analog synth with four oscillators all going through the amp ADSR. Is this considered a 4-mic-position library?

-

OK, I think I've got it. It's a way to merge different sample sets into one monolithic samplemap, so you can save on instances. Let me know if this is correct.

One question about this multi-mic feature: does it require all of the sample sets to have the same number of keygroups?

-

Hi, a mic position is exactly what you understand it to be: a set of samples recorded by a particular mic. If you use two mic placements in the recording session, then you'll have two mic positions (these could be stereo or mono setups).

The merge mic feature allows you to "group" all of the samples together within HISE. So if you record a trumpet playing a C on three different mics, you can group those three recordings into one multi-mic sample. This doesn't actually render any new audio files. It just means HISE treats all three samples as a group and understands that they are exactly the same audio material, just recorded on three different mics. You can still purge individual mic positions and control their parameters, like volume and pan, independently.

All of the samples for each mic must be the same length and you must have the same number of samples for each mic position. So if you have 5 close mic samples you must also have 5 stage mic samples. If you have differing numbers of samples for the different mics then you'll need to use multiple samplers.
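To make the two constraints above concrete, here is a minimal sketch of a pre-flight check you could run over your own file list before merging. It is not HISE code and does not use any HISE API: the `Sample` class, its fields, and `find_merge_problems` are all hypothetical names invented for illustration, and it assumes you can identify which mapping slot each file belongs to (here a plain string such as `"C3"`).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """One recorded file. All field names are hypothetical."""
    mic: str         # mic position label, e.g. "close" or "stage"
    slot: str        # which mapping slot the file belongs to, e.g. "C3"
    num_frames: int  # length of the audio file in sample frames


def find_merge_problems(samples):
    """Return human-readable reasons why the mic positions cannot be merged."""
    by_mic = defaultdict(list)
    for s in samples:
        by_mic[s.mic].append(s)

    problems = []
    mics = sorted(by_mic)
    if not mics:
        return problems

    # Use the first mic (alphabetically) as the reference to compare against.
    reference = mics[0]
    ref_lengths = {s.slot: s.num_frames for s in by_mic[reference]}

    for mic in mics[1:]:
        # Constraint 1: every mic position needs the same number of samples.
        if len(by_mic[mic]) != len(by_mic[reference]):
            problems.append(
                f"{mic} has {len(by_mic[mic])} samples, "
                f"{reference} has {len(by_mic[reference])}"
            )
        # Constraint 2: matching samples must have the same length.
        for s in by_mic[mic]:
            ref_len = ref_lengths.get(s.slot)
            if ref_len is not None and ref_len != s.num_frames:
                problems.append(
                    f"{s.slot}: {mic} is {s.num_frames} frames, "
                    f"{reference} is {ref_len}"
                )
    return problems


if __name__ == "__main__":
    library = [
        Sample("close", "C3", 1000),
        Sample("stage", "C3", 900),   # length differs from the close mic
        Sample("close", "D3", 1000),  # no stage counterpart
    ]
    for problem in find_merge_problems(library):
        print(problem)
```

If the list comes back empty, the set at least satisfies the count and length rules described here. Anything it reports would need fixing, or a separate sampler.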

More info - http://178.62.82.76:4567/topic/75/multimic-samples

-

This is exactly how I've been using the term with other sample libraries. Actually, multi-mic positions can save on CPU, because you don't have to instantiate a new sampler for each oscillator, provided the oscillators all have the same keygroups.

-

So can you tell me, with the multi-mic positions, can each sibling have, say, a different LFO, filter envelope, pitch, detune, FX, volume, etc.? Is there some documentation on what each sibling has to share with the sampler and what can be individual to the sibling?

-

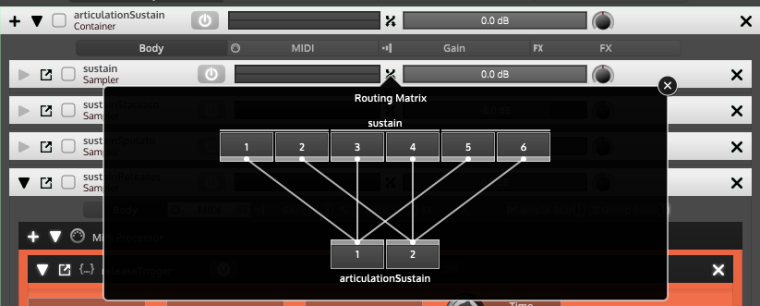

Yeah, you can separate most things through the routing window. Have a look at this screenshot: in the routing matrix you can right-click to set the number of channels. The routing window is available for most FX as well as samplers and containers, so you just route the channels you want to the FX you want.

You might find these helpful:

http://forum.hise.audio/topic/176/filter-with-multi-mic-samples/12

http://forum.hise.audio/topic/67/multi-channel-volume-and-pan-control

http://forum.hise.audio/topic/195/volume-meter-and-multi-mic-samples

http://forum.hise.audio/topic/190/multi-mics-rr-groups/12

-

I don't think the MultiMic concept is suitable for your use case, as you can't use different LFOs or modulators on different mic channels. The whole idea is to avoid having multiple LFOs and envelopes when you only need one. There are more limitations (for example, the loop points must be equal), so it's really only usable for multi-mic configurations (hence the name).

Just use multiple samplers. The overhead of having multiple instances should not be a big problem (or, in your special case, use multiple JUCE synthesisers which load the streaming sampler sounds).

You might want to experiment with the SynthesiserGroup module, which basically lets you coalesce multiple samplers at the voice level. That allows things like having one AHDSR envelope for all of them. But after all, since you want to go full C++ anyway, you can just hardcode this structure and get the exact signal flow you want.